Compare commits: v3.1-beta1...v3.2-alpha (153 commits)

| SHA1 |
|---|
| 89ed1e012d |
| ff9beae427 |
| 302a9ecd86 |
| c0086f8953 |
| ddd82b935b |
| 51d6731aa8 |
| 36f2b672bd |
| 81a785b360 |
| 670532ef31 |
| dd55ca4079 |
| f66957d867 |
| 69975b37fb |
| c299626d18 |
| 7b4f10080f |
| 787e3dba9c |
| 86d504722c |
| 6791bc4abd |
| db5186bf38 |
| d2b183bb27 |
| 033fcf68f7 |
| 14d45667de |
| f2a3221911 |
| 8baee52ab1 |
| d114f63f29 |
| b36b64cc94 |
| 5a70172a50 |
| f635e8cd67 |
| 0e362e5d89 |
| f2ab2938b0 |
| 2d96d13125 |
| 883984fda3 |
| db2625b08c |
| e11c332808 |
| 07cb7cfad4 |
| 7b4a986f13 |
| be2474bb1c |
| 81e7cd940c |
| 0b4448798e |
| b1689f5066 |
| dcb9cdac44 |
| 9dc280abad |
| 6b8c683315 |
| 66e123849b |
| 7325e1e351 |
| 9f4ea51622 |
| 8c1058a808 |
| d9e759a3eb |
| 46457b3aca |
| 59f7ccc352 |
| 578fb1be4b |
| f9b16c050b |
| 2ba6fe5235 |
| 8e2c91735a |
| d57e3922a0 |
| 4b25dd76f1 |
| 2843781aa6 |
| ce987328d9 |
| 9a902f0f38 |
| ee2c074539 |
| 77f1c16414 |
| c5363a1538 |
| 119225ba5b |
| 84437ee1d0 |
| 1286bfafd0 |
| 9fc2703638 |
| 01dc65af96 |
| 082153e0ce |
| 77f5474447 |
| 55ff14f1d8 |
| 2acd26b304 |
| ec9459c1d2 |
| 233fd83ded |
| 37c24e092c |
| b2bf11382c |
| 19b918044e |
| 67d9240e7b |
| 1a5e4a9cdd |
| 31f8c359ff |
| b50b7b7563 |
| 37f91e1e08 |
| a2f3aee5b1 |
| 75d0a3cc7e |
| 98c55e2aa8 |
| d478e22111 |
| 3a4953fbc5 |
| 8d4e041a9c |
| 8725d56bc9 |
| ab0bfdbf4e |
| ea9012e476 |
| 97e3c110b3 |
| 9264e8de6d |
| 830ccf1bd4 |
| a389e4c81c |
| 36a66fbafc |
| b70c9986c7 |
| 664ea32c96 |
| 30f30babea |
| 5e04aabf37 |
| 59d53e9664 |
| 171f0ac5ad |
| 0ce3bf1297 |
| c682665888 |
| 086cfe570b |
| 521d1078bd |
| 8ea178af1f |
| 3e39e1553e |
| f0cc2bca2a |
| 59b0c23a20 |
| 401a3f73cc |
| 8ec5ed2f4f |
| 8318b2f9bf |
| 72b97ab2e8 |
| a16a038f0e |
| fc0da9d380 |
| 31be12c0bf |
| 176f04b302 |
| 7696d8c16d |
| 190a73ec10 |
| 2bf015e127 |
| 671eda7386 |
| 3d4b26cec3 |
| c0ea311e18 |
| b7b2723b2e |
| ec1d3ff93e |
| 352d5e6094 |
| 488ff6f551 |
| f52b8bbf58 |
| e47d461999 |
| a920744b1e |
| 63f423a201 |
| db6523f3c0 |
| 6b172dce2d |
| 85d493469d |
| bef3be4955 |
| f9719ba87e |
| 4b97f789df |
| ed7cd41ad7 |
| 62e19d97c2 |
| 594a2664c4 |
| d8fbc96be6 |
| 61bb590112 |
| 86ea5e49f4 |
| 01642365c7 |
| 4910b1dfb5 |
| 966df73d2f |
| 69ed827c0d |
| e79f6ac157 |
| 59efd070a1 |
| 80c1bdad1c |
| cf72de7c28 |
| 686bb48bda |
| 6a48b8a2a9 |
| 477b66c342 |

.github/FUNDING.yml (vendored, Normal file): 5 changed lines

@@ -0,0 +1,5 @@
# These are supported funding model platforms

github: psy0rz
ko_fi: psy0rz
custom: https://paypal.me/psy0rz

.github/workflows/regression.yml (vendored): 12 changed lines

@@ -17,7 +17,7 @@ jobs:

- name: Prepare
run: sudo apt update && sudo apt install zfsutils-linux && sudo -H pip3 install coverage unittest2 mock==3.0.5 coveralls
run: sudo apt update && sudo apt install zfsutils-linux lzop pigz zstd gzip xz-utils lz4 mbuffer && sudo -H pip3 install coverage unittest2 mock==3.0.5 coveralls

- name: Regression test

@@ -27,7 +27,7 @@ jobs:
- name: Coveralls
env:
GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
run: coveralls --service=github
run: coveralls --service=github || true

ubuntu18:
runs-on: ubuntu-18.04

@@ -39,7 +39,7 @@ jobs:

- name: Prepare
run: sudo apt update && sudo apt install zfsutils-linux python3-setuptools && sudo -H pip3 install coverage unittest2 mock==3.0.5 coveralls
run: sudo apt update && sudo apt install zfsutils-linux python3-setuptools lzop pigz zstd gzip xz-utils liblz4-tool mbuffer && sudo -H pip3 install coverage unittest2 mock==3.0.5 coveralls

- name: Regression test

@@ -49,7 +49,7 @@ jobs:
- name: Coveralls
env:
GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
run: coveralls --service=github
run: coveralls --service=github || true

ubuntu18_python2:
runs-on: ubuntu-18.04

@@ -64,7 +64,7 @@ jobs:
python-version: '2.x'

- name: Prepare
run: sudo apt update && sudo apt install zfsutils-linux python-setuptools && sudo -H pip install coverage unittest2 mock==3.0.5 coveralls
run: sudo apt update && sudo apt install zfsutils-linux python-setuptools lzop pigz zstd gzip xz-utils liblz4-tool mbuffer && sudo -H pip install coverage unittest2 mock==3.0.5 coveralls colorama

- name: Regression test
run: sudo -E ./tests/run_tests

@@ -73,4 +73,4 @@ jobs:
env:
GITHUB_TOKEN: ${{ secrets.GITHUB_TOKEN }}
COVERALLS_REPO_TOKEN: ${{ secrets.GITHUB_TOKEN }}
run: coveralls --service=github
run: coveralls --service=github || true

.gitignore (vendored): 1 changed line

@@ -11,3 +11,4 @@ __pycache__
python2.env
venv
.idea
password.sh

README.md: 653 changed lines

@@ -5,7 +5,7 @@

## Introduction

This is a tool I wrote to make replicating ZFS datasets easy and reliable.
ZFS-autobackup tries to be the most reliable and easiest to use tool, while having all the features.

You can either use it as a **backup** tool, **replication** tool or **snapshot** tool.

@@ -13,657 +13,46 @@ You can select what to backup by setting a custom `ZFS property`. This makes it

Other settings are just specified on the commandline: Simply setup and test your zfs-autobackup command and fix all the issues you might encounter. When you're done you can just copy/paste your command to a cron or script.

Since its using ZFS commands, you can see what its actually doing by specifying `--debug`. This also helps a lot if you run into some strange problem or error. You can just copy-paste the command that fails and play around with it on the commandline. (something I missed in other tools)
Since its using ZFS commands, you can see what it's actually doing by specifying `--debug`. This also helps a lot if you run into some strange problem or error. You can just copy-paste the command that fails and play around with it on the commandline. (something I missed in other tools)

An important feature thats missing from other tools is a reliable `--test` option: This allows you to see what zfs-autobackup will do and tune your parameters. It will do everything, except make changes to your system.

zfs-autobackup tries to be the easiest to use backup tool for zfs.

## Features

* Works across operating systems: Tested with **Linux**, **FreeBSD/FreeNAS** and **SmartOS**.
* Low learning curve: no complex daemons or services, no additional software or networking needed. (Only read this page)
* Plays nicely with existing replication systems. (Like Proxmox HA)
* Automatically selects filesystems to backup by looking at a simple ZFS property. (recursive)
* Automatically selects filesystems to backup by looking at a simple ZFS property.
* Creates consistent snapshots. (takes all snapshots at once, atomicly.)
* Multiple backups modes:
* Backup local data on the same server.
* "push" local data to a backup-server via SSH.
* "pull" remote data from a server via SSH and backup it locally.
* Or even pull data from a server while pushing the backup to another server. (Zero trust between source and target server)
* Can be scheduled via a simple cronjob or run directly from commandline.
* Supports resuming of interrupted transfers.
* "pull+push": Zero trust between source and target.
* Can be scheduled via simple cronjob or run directly from commandline.
* ZFS encryption support: Can decrypt / encrypt or even re-encrypt datasets during transfer.
* Supports sending with compression. (Using pigz, zstd etc)
* IO buffering to speed up transfer.
* Bandwidth rate limiting.
* Multiple backups from and to the same datasets are no problem.
* Creates the snapshot before doing anything else. (assuring you at least have a snapshot if all else fails)
* Checks everything but tries continue on non-fatal errors when possible. (Reports error-count when done)
* Resillient to errors.
* Ability to manually 'finish' failed backups to see whats going on.
* Easy to debug and has a test-mode. Actual unix commands are printed.
* Uses **progressive thinning** for older snapshots.
* Uses zfs-holds on important snapshots so they cant be accidentally destroyed.
* Uses progressive thinning for older snapshots.
* Uses zfs-holds on important snapshots to prevent accidental deletion.
* Automatic resuming of failed transfers.
* Can continue from existing common snapshots. (e.g. easy migration)
* Gracefully handles destroyed datasets on source.
* Easy migration from existing zfs backups.
* Gracefully handles datasets that no longer exist on source.
* Complete and clean logging.
* Easy installation:
* Just install zfs-autobackup via pip, or download it manually.
* Written in python and uses zfs-commands, no 3rd party dependency's or libraries needed.
* No separate config files or properties. Just one zfs-autobackup command you can copy/paste in your backup script.
* Just install zfs-autobackup via pip.
* Only needs to be installed on one side.
* Written in python and uses zfs-commands, no special 3rd party dependency's or compiled libraries needed.
* No annoying config files or properties.

## Installation
## Getting started

### Using pip

The recommended way on most servers is to use [pip](https://pypi.org/project/zfs-autobackup/):

```console
[root@server ~]# pip install --upgrade zfs-autobackup
```

This can also be used to upgrade zfs-autobackup to the newest stable version.

### Using easy_install

On older servers you might have to use easy_install

```console
[root@server ~]# easy_install zfs-autobackup
```

## Example

In this example we're going to backup a machine called `server1` to a machine called `backup`.

### Setup SSH login

zfs-autobackup needs passwordless login via ssh. This means generating an ssh key and copying it to the remote server.

#### Generate SSH key on `backup`

On the backup-server that runs zfs-autobackup you need to create an SSH key. You only need to do this once.

Use the `ssh-keygen` command and leave the passphrase empty:

```console
root@backup:~# ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:McJhCxvaxvFhO/3e8Lf5gzSrlTWew7/bwrd2U2EHymE root@backup
The key's randomart image is:
+---[RSA 2048]----+
| + = |
| + X * E . |
| . = B + o o . |
| . o + o o.|
| S o .oo|
| . + o= +|
| . ++==.|
| .+o**|
| .. +B@|
+----[SHA256]-----+
root@backup:~#
```

#### Copy SSH key to `server1`

Now you need to copy the public part of the key to `server1`

The `ssh-copy-id` command is a handy tool to automate this. It will just ask for your password.

```console
root@backup:~# ssh-copy-id root@server1.server.com
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
Password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'root@server1.server.com'"
and check to make sure that only the key(s) you wanted were added.

root@backup:~#
```
This allows the backup-server to login to `server1` as root without password.

### Select filesystems to backup

Its important to choose a unique and consistent backup name. In this case we name our backup: `offsite1`.

On the source zfs system set the ```autobackup:offsite1``` zfs property to true:

```console
[root@server1 ~]# zfs set autobackup:offsite1=true rpool
[root@server1 ~]# zfs get -t filesystem,volume autobackup:offsite1
NAME                      PROPERTY             VALUE  SOURCE
rpool                     autobackup:offsite1  true   local
rpool/ROOT                autobackup:offsite1  true   inherited from rpool
rpool/ROOT/server1-1      autobackup:offsite1  true   inherited from rpool
rpool/data                autobackup:offsite1  true   inherited from rpool
rpool/data/vm-100-disk-0  autobackup:offsite1  true   inherited from rpool
rpool/swap                autobackup:offsite1  true   inherited from rpool
...
```

ZFS properties are ```inherited``` by child datasets. Since we've set the property on the highest dataset, we're essentially backupping the whole pool.

Because we don't want to backup everything, we can exclude certain filesystem by setting the property to false:

```console
[root@server1 ~]# zfs set autobackup:offsite1=false rpool/swap
[root@server1 ~]# zfs get -t filesystem,volume autobackup:offsite1
NAME                      PROPERTY             VALUE  SOURCE
rpool                     autobackup:offsite1  true   local
rpool/ROOT                autobackup:offsite1  true   inherited from rpool
rpool/ROOT/server1-1      autobackup:offsite1  true   inherited from rpool
rpool/data                autobackup:offsite1  true   inherited from rpool
rpool/data/vm-100-disk-0  autobackup:offsite1  true   inherited from rpool
rpool/swap                autobackup:offsite1  false  local
...
```

The autobackup-property can have 3 values:
* ```true```: Backup the dataset and all its children
* ```false```: Dont backup the dataset and all its children. (used to exclude certain datasets)
* ```child```: Only backup the children off the dataset, not the dataset itself.
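
The three values work together with normal ZFS property inheritance: a child dataset sees its parent's value with an `inherited` source. The following is a minimal Python sketch of the resulting decision. It is not zfs-autobackup's code. It assumes the property value, and whether it is set locally, are already known (for example from `zfs get`). `is_selected` is a hypothetical helper, and its reason strings are modelled on the verbose output shown in this README.

```python
from typing import Optional, Tuple


def is_selected(value: Optional[str], source_is_local: bool) -> Tuple[bool, str]:
    """Decide whether a dataset takes part in a backup (simplified sketch).

    value:           the autobackup:<backup-name> value, or None if unset.
    source_is_local: True if set on this dataset, False if inherited.
    """
    if value == "true":
        # Selected, whether set here or inherited from a parent.
        return True, ("direct selection" if source_is_local else "inherited selection")
    if value == "child":
        # The dataset carrying the value is skipped; its descendants
        # inherit "child" and are selected.
        if source_is_local:
            return False, "only childs"
        return True, "inherited selection"
    if value == "false":
        # Excluded here, and in every child that inherits the value.
        return False, "disabled"
    # Unset or unknown value (hypothetical reason string).
    return False, "not selected"


examples = [
    ("rpool", "true", True),
    ("rpool/data", "true", False),
    ("rpool/swap", "false", True),
    ("test_source2/fs2", "child", True),
    ("test_source2/fs2/sub", "child", False),
]
for name, value, local in examples:
    selected, reason = is_selected(value, local)
    print(f"{name}: {'Selected' if selected else 'Ignored'} ({reason})")
```

For these example values, the sketch prints the same Selected/Ignored decisions that appear in the zfs-autobackup runs shown in this README.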

Only use the zfs-command to set these properties, not the zpool command.

### Running zfs-autobackup

Run the script on the backup server and pull the data from the server specified by --ssh-source.

```console
[root@backup ~]# zfs-autobackup --ssh-source server1.server.com offsite1 backup/server1 --progress --verbose

#### Settings summary
[Source] Datasets on: server1.server.com
[Source] Keep the last 10 snapshots.
[Source] Keep every 1 day, delete after 1 week.
[Source] Keep every 1 week, delete after 1 month.
[Source] Keep every 1 month, delete after 1 year.
[Source] Send all datasets that have 'autobackup:offsite1=true' or 'autobackup:offsite1=child'

[Target] Datasets are local
[Target] Keep the last 10 snapshots.
[Target] Keep every 1 day, delete after 1 week.
[Target] Keep every 1 week, delete after 1 month.
[Target] Keep every 1 month, delete after 1 year.
[Target] Receive datasets under: backup/server1

#### Selecting
[Source] rpool: Selected (direct selection)
[Source] rpool/ROOT: Selected (inherited selection)
[Source] rpool/ROOT/server1-1: Selected (inherited selection)
[Source] rpool/data: Selected (inherited selection)
[Source] rpool/data/vm-100-disk-0: Selected (inherited selection)
[Source] rpool/swap: Ignored (disabled)

#### Snapshotting
[Source] rpool: No changes since offsite1-20200218175435
[Source] rpool/ROOT: No changes since offsite1-20200218175435
[Source] rpool/data: No changes since offsite1-20200218175435
[Source] Creating snapshot offsite1-20200218180123

#### Sending and thinning
[Target] backup/server1/rpool/ROOT/server1-1@offsite1-20200218175435: receiving full
[Target] backup/server1/rpool/ROOT/server1-1@offsite1-20200218175547: receiving incremental
[Target] backup/server1/rpool/ROOT/server1-1@offsite1-20200218175706: receiving incremental
[Target] backup/server1/rpool/ROOT/server1-1@offsite1-20200218180049: receiving incremental
[Target] backup/server1/rpool/ROOT/server1-1@offsite1-20200218180123: receiving incremental
[Target] backup/server1/rpool/data@offsite1-20200218175435: receiving full
[Target] backup/server1/rpool/data/vm-100-disk-0@offsite1-20200218175435: receiving full
...
```

Note that this is called a "pull" backup: The backup server pulls the backup from the server. This is usually the preferred way.

Its also possible to let a server push its backup to the backup-server. However this has security implications. In that case you would setup the SSH keys the other way around and use the --ssh-target parameter on the server.
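
The "receiving full" and "receiving incremental" lines in the output follow the usual ZFS replication pattern. The first snapshot of a dataset is sent as a full stream, and each later snapshot is sent as an incremental stream on top of the previous one. The following is a minimal Python sketch that only plans the `zfs send` commands. It assumes snapshot names are listed oldest first and resumes from the newest snapshot that both sides have in common. `plan_sends` is a hypothetical helper. It leaves out everything the real tool also does, such as thinning, holds, resuming, and the actual `ssh`/`zfs recv` pipe.

```python
from typing import List


def plan_sends(dataset: str, source_snaps: List[str], target_snaps: List[str]) -> List[List[str]]:
    """Return the zfs send commands needed to bring the target up to date.

    source_snaps is ordered oldest first; target_snaps holds the snapshot
    names that already exist on the target.
    """
    on_target = set(target_snaps)
    # Newest snapshot that exists on both sides, if any.
    common = [s for s in source_snaps if s in on_target]
    prev = common[-1] if common else None
    start = source_snaps.index(prev) + 1 if prev is not None else 0

    commands = []
    for snap in source_snaps[start:]:
        if prev is None:
            # Nothing in common yet: send a full stream.
            commands.append(["zfs", "send", f"{dataset}@{snap}"])
        else:
            # Send only the changes since the previous snapshot.
            commands.append(["zfs", "send", "-i", f"{dataset}@{prev}", f"{dataset}@{snap}"])
        prev = snap
    return commands


snaps = ["offsite1-20200218175435", "offsite1-20200218175547", "offsite1-20200218175706"]
for cmd in plan_sends("rpool/ROOT/server1-1", snaps, []):
    print(" ".join(cmd))
```

In a pull backup, each planned stream would be produced on the source over SSH and piped into `zfs recv` on the backup server.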

### Automatic backups

Now every time you run the command, zfs-autobackup will create a new snapshot and replicate your data.

Older snapshots will eventually be deleted, depending on the `--keep-source` and `--keep-target` settings. (The defaults are shown above under the 'Settings summary')

Once you've got the correct settings for your situation, you can just store the command in a cronjob.

Or just create a script and run it manually when you need it.

## Use as snapshot tool

You can use zfs-autobackup to only make snapshots.

Just dont specify the target-path:
```console
root@ws1:~# zfs-autobackup test --verbose
zfs-autobackup v3.0 - Copyright 2020 E.H.Eefting (edwin@datux.nl)

#### Source settings
[Source] Datasets are local
[Source] Keep the last 10 snapshots.
[Source] Keep every 1 day, delete after 1 week.
[Source] Keep every 1 week, delete after 1 month.
[Source] Keep every 1 month, delete after 1 year.
[Source] Selects all datasets that have property 'autobackup:test=true' (or childs of datasets that have 'autobackup:test=child')

#### Selecting
[Source] test_source1/fs1: Selected (direct selection)
[Source] test_source1/fs1/sub: Selected (inherited selection)
[Source] test_source2/fs2: Ignored (only childs)
[Source] test_source2/fs2/sub: Selected (inherited selection)

#### Snapshotting
[Source] Creating snapshots test-20200710125958 in pool test_source1
[Source] Creating snapshots test-20200710125958 in pool test_source2

#### Thinning source
[Source] test_source1/fs1@test-20200710125948: Destroying
[Source] test_source1/fs1/sub@test-20200710125948: Destroying
[Source] test_source2/fs2/sub@test-20200710125948: Destroying

#### All operations completed successfully
(No target_path specified, only operated as snapshot tool.)
```

This also allows you to make several snapshots during the day, but only backup the data at night when the server is not busy.
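
In the output above, snapshots are created in one batch per pool ("Creating snapshots ... in pool ..."). `zfs snapshot` accepts several `dataset@name` arguments in one call and takes them atomically, so they all correspond to the same moment. As far as we know, all names in such a call must belong to the same pool, which would explain the per-pool grouping. The following is a minimal Python sketch that builds such commands. `snapshot_commands` is a hypothetical helper, and the `<backup-name>-<YYYYMMDDhhmmss>` name format is inferred from the example output.

```python
from datetime import datetime
from typing import Dict, List


def snapshot_commands(datasets: List[str], backup_name: str, now: datetime) -> List[List[str]]:
    """Group selected datasets by pool and build one zfs snapshot command per pool."""
    snapname = f"{backup_name}-{now.strftime('%Y%m%d%H%M%S')}"
    by_pool: Dict[str, List[str]] = {}
    for ds in datasets:
        pool = ds.split("/", 1)[0]  # the pool is the first path component
        by_pool.setdefault(pool, []).append(f"{ds}@{snapname}")
    # One call per pool: all snapshots in that call are taken at the same moment.
    return [["zfs", "snapshot", *names] for names in by_pool.values()]


selected = ["test_source1/fs1", "test_source1/fs1/sub", "test_source2/fs2/sub"]
for cmd in snapshot_commands(selected, "test", datetime(2020, 7, 10, 12, 59, 58)):
    print(" ".join(cmd))
```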

## Thinning out obsolete snapshots

The thinner is the thing that destroys old snapshots on the source and target.

The thinner operates "stateless": There is nothing in the name or properties of a snapshot that indicates how long it will be kept. Everytime zfs-autobackup runs, it will look at the timestamp of all the existing snapshots. From there it will determine which snapshots are obsolete according to your schedule. The advantage of this stateless system is that you can always change the schedule.

Note that the thinner will ONLY destroy snapshots that are matching the naming pattern of zfs-autobackup. If you use `--other-snapshots`, it wont destroy those snapshots after replicating them to the target.
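
Judging by the example output, zfs-autobackup names its snapshots `<backup-name>-<YYYYMMDDhhmmss>`, for example `offsite1-20200218175435`. The following is a minimal Python sketch, under that assumption, that recovers the timestamp from such a name. It returns `None` for snapshots that don't match the pattern, which are the ones the thinner leaves alone. `snapshot_time` is a hypothetical helper, not part of zfs-autobackup.

```python
from datetime import datetime
from typing import Optional


def snapshot_time(backup_name: str, snapshot: str) -> Optional[datetime]:
    """Return the creation time encoded in an autobackup snapshot name.

    Accepts either 'pool/fs@name' or just 'name'. Returns None when the
    name does not follow the assumed '<backup-name>-YYYYMMDDhhmmss' pattern.
    """
    name = snapshot.split("@", 1)[-1]
    prefix = backup_name + "-"
    if not name.startswith(prefix):
        return None
    stamp = name[len(prefix):]
    if len(stamp) != 14 or not stamp.isdigit():
        return None
    try:
        return datetime.strptime(stamp, "%Y%m%d%H%M%S")
    except ValueError:  # 14 digits that don't form a valid date and time
        return None


print(snapshot_time("offsite1", "rpool/data@offsite1-20200218175435"))
print(snapshot_time("offsite1", "rpool/data@manual-before-upgrade"))
```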

### Destroying missing datasets

When a dataset has been destroyed or deselected on the source, but still exists on the target we call it a missing dataset. Missing datasets will be still thinned out according to the schedule.

The final snapshot will never be destroyed, unless you specify a **deadline** with the `--destroy-missing` option:

In that case it will look at the last snapshot we took and determine if is older than the deadline you specified. e.g: `--destroy-missing 30d` will start destroying things 30 days after the last snapshot.

#### After the deadline

When the deadline is passed, all our snapshots, except the last one will be destroyed. Irregardless of the normal thinning schedule.

The dataset has to have the following properties to be finally really destroyed:

* The dataset has no direct child-filesystems or volumes.
* The only snapshot left is the last one created by zfs-autobackup.
* The remaining snapshot has no clones.
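
The deadline check and the three final-destroy conditions can be summarised in a few lines. The following is a minimal Python sketch, not the tool's implementation, under these assumptions:

* `MissingDataset`, `parse_duration` and `can_destroy` are hypothetical names.
* The facts about each dataset are gathered beforehand (e.g. from `zfs list`).
* The unit letters are assumed to match the thinning schedule's units, plus `w` for weeks.
* Months and years are approximated as 30 and 365 days.

```python
import re
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List

# Assumed unit lengths in seconds; months and years are approximations.
UNITS = {"s": 1, "min": 60, "h": 3600, "d": 86400, "w": 7 * 86400,
         "m": 30 * 86400, "y": 365 * 86400}


def parse_duration(text: str) -> timedelta:
    """Turn e.g. '30d' or '15min' into a timedelta ('min' is tried before 'm')."""
    match = re.fullmatch(r"(\d+)(min|[smhdwy])", text)
    if match is None:
        raise ValueError(f"invalid duration: {text!r}")
    return timedelta(seconds=int(match.group(1)) * UNITS[match.group(2)])


@dataclass
class MissingDataset:
    last_snapshot: str           # newest autobackup snapshot on the target
    last_snapshot_time: datetime
    snapshots: List[str]         # snapshots that still exist on the dataset
    child_count: int             # direct child filesystems/volumes
    last_snapshot_clones: int    # number of clones of the newest snapshot


def can_destroy(ds: MissingDataset, deadline: str, now: datetime) -> bool:
    """True once the deadline has passed and all three conditions hold."""
    if now - ds.last_snapshot_time <= parse_duration(deadline):
        return False  # deadline not reached: only normal thinning applies
    return (ds.child_count == 0
            and ds.snapshots == [ds.last_snapshot]
            and ds.last_snapshot_clones == 0)


ds = MissingDataset("offsite1-20200101000000", datetime(2020, 1, 1),
                    ["offsite1-20200101000000"], 0, 0)
print(can_destroy(ds, "30d", datetime(2020, 2, 15)))
```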

### Thinning schedule

The default thinning schedule is: `10,1d1w,1w1m,1m1y`.

The schedule consists of multiple rules separated by a `,`

A plain number specifies how many snapshots you want to always keep, regardless of time or interval.

The format of the other rules is: `<Interval><TTL>`.

* Interval: The minimum interval between the snapshots. Snapshots with intervals smaller than this will be destroyed.
* TTL: The maximum time to life time of a snapshot, after that they will be destroyed.
* These are the time units you can use for interval and TTL:
  * `y`: Years
  * `m`: Months
  * `d`: Days
  * `h`: Hours
  * `min`: Minutes
  * `s`: Seconds
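
To make the rule syntax concrete, here is a minimal Python sketch that parses a schedule string such as the default `10,1d1w,1w1m,1m1y`. It returns a "keep last N" count and a list of (interval, TTL) pairs in seconds. It is not zfs-autobackup's parser, and it makes these assumptions:

* `parse_schedule` is a hypothetical name.
* Months and years are approximated as 30 and 365 days.
* `w` (weeks) is accepted because the default schedule uses it, even though the unit list above does not mention it.

```python
import re
from typing import List, Tuple

# Assumed unit lengths in seconds; months and years are approximations.
UNITS = {"y": 365 * 86400, "m": 30 * 86400, "w": 7 * 86400, "d": 86400,
         "h": 3600, "min": 60, "s": 1}

# <number><unit><number><unit>; "min" is tried before the one-letter units.
RULE_RE = re.compile(r"(\d+)(min|[ymwdhs])(\d+)(min|[ymwdhs])")


def parse_schedule(schedule: str) -> Tuple[int, List[Tuple[int, int]]]:
    keep_last = 0
    rules = []
    for part in schedule.split(","):
        part = part.strip()
        if part.isdigit():
            # A plain number: always keep this many of the newest snapshots.
            keep_last = int(part)
            continue
        match = RULE_RE.fullmatch(part)
        if match is None:
            raise ValueError(f"invalid thinning rule: {part!r}")
        interval = int(match.group(1)) * UNITS[match.group(2)]
        ttl = int(match.group(3)) * UNITS[match.group(4)]
        rules.append((interval, ttl))
    return keep_last, rules


print(parse_schedule("10,1d1w,1w1m,1m1y"))
```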

|

||||

|

||||

Since this might sound very complicated, the `--verbose` option will show you what it all means:

|

||||

|

||||

```console

|

||||

[Source] Keep the last 10 snapshots.

|

||||

[Source] Keep every 1 day, delete after 1 week.

|

||||

[Source] Keep every 1 week, delete after 1 month.

|

||||

[Source] Keep every 1 month, delete after 1 year.

|

||||

```

|

||||

|

||||

A snapshot will only be destroyed if it not needed anymore by ANY of the rules.

|

||||

|

||||

You can specify as many rules as you need. The order of the rules doesn't matter.

|

||||

|

||||

Keep in mind its up to you to actually run zfs-autobackup often enough: If you want to keep hourly snapshots, you have to make sure you at least run it every hour.

|

||||

|

||||

However, its no problem if you run it more or less often than that: The thinner will still keep an optimal set of snapshots to match your schedule as good as possible.

|

||||

|

||||

If you want to keep as few snapshots as possible, just specify 0. (`--keep-source=0` for example)

|

||||

|

||||

If you want to keep ALL the snapshots, just specify a very high number.

|

||||

|

||||

### More details about the Thinner

|

||||

|

||||

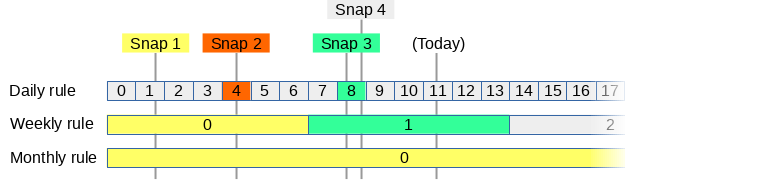

We will give a practical example of how the thinner operates.

|

||||

|

||||

Say we want have 3 thinner rules:

|

||||

|

||||

* We want to keep daily snapshots for 7 days.

|

||||

* We want to keep weekly snapshots for 4 weeks.

|

||||

* We want to keep monthly snapshots for 12 months.

|

||||

|

||||

So far we have taken 4 snapshots at random moments:

|

||||

|

||||

|

||||

|

||||

For every rule, the thinner will divide the timeline in blocks and assign each snapshot to a block.

|

||||

|

||||

A block can only be assigned one snapshot: If multiple snapshots fall into the same block, it only assigns it to the oldest that we want to keep.

|

||||

|

||||

The colors show to which block a snapshot belongs:

|

||||

|

||||

* Snapshot 1: This snapshot belongs to daily block 1, weekly block 0 and monthly block 0. However the daily block is too old.

|

||||

* Snapshot 2: Since weekly block 0 and monthly block 0 already have a snapshot, it only belongs to daily block 4.

|

||||

* Snapshot 3: This snapshot belongs to daily block 8 and weekly block 1.

|

||||

* Snapshot 4: Since daily block 8 already has a snapshot, this one doesn't belong to anything and can be deleted right away. (it will be keeped for now since its the last snapshot)

|

||||

|

||||

zfs-autobackup will re-evaluate this on every run: As soon as a snapshot doesn't belong to any block anymore it will be destroyed.

|

||||

|

||||

Snapshots on the source that still have to be send to the target wont be destroyed off course. (If the target still wants them, according to the target schedule)
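
The block assignment described above can be approximated in a few lines of Python. This is a simplified sketch of the idea, not zfs-autobackup's actual thinner. It makes these assumptions:

* Timestamps are plain seconds.
* Each rule's blocks are aligned to multiples of its interval.
* A snapshot only counts for a rule while it is younger than that rule's TTL.
* Within a block only the oldest snapshot is kept, and a snapshot survives if any rule (or the "keep last N" count) wants it.
* The newest snapshot is always kept, as the example notes for snapshot 4.

`thin` is a hypothetical name.

```python
from typing import Iterable, List, Set, Tuple


def thin(times: Iterable[int], keep_last: int, rules: List[Tuple[int, int]], now: int) -> Set[int]:
    """Return the snapshot timestamps to keep; everything else may be destroyed.

    rules is a list of (interval, ttl) pairs in seconds.
    """
    ordered = sorted(times)  # oldest first
    keep: Set[int] = set()
    if ordered:
        keep.add(ordered[-1])  # the newest snapshot is always kept
    if keep_last > 0:
        keep.update(ordered[-keep_last:])
    for interval, ttl in rules:
        claimed = set()  # blocks of this rule that already hold a snapshot
        for t in ordered:
            if now - t > ttl:
                continue  # too old for this rule
            block = t // interval
            if block not in claimed:
                claimed.add(block)  # the oldest snapshot in the block wins
                keep.add(t)
    return keep


# One rule: at most one snapshot per 10 seconds, each kept for 100 seconds.
print(sorted(thin([1, 5, 12, 25], keep_last=0, rules=[(10, 100)], now=30)))
```

Because the result is recomputed from the timestamps alone on every run, changing the schedule simply changes which snapshots end up in the keep set. This matches the "stateless" behaviour described earlier.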

|

||||

|

||||

## Tips

|

||||

|

||||

* Use ```--debug``` if something goes wrong and you want to see the commands that are executed. This will also stop at the first error.

|

||||

* You can split up the snapshotting and sending tasks by creating two cronjobs. Create a separate snapshotter-cronjob by just omitting target-path.

|

||||

* Set the ```readonly``` property of the target filesystem to ```on```. This prevents changes on the target side. (Normally, if there are changes the next backup will fail and will require a zfs rollback.) Note that readonly means you cant change the CONTENTS of the dataset directly. Its still possible to receive new datasets and manipulate properties etc.

|

||||

* Use ```--clear-refreservation``` to save space on your backup server.

|

||||

* Use ```--clear-mountpoint``` to prevent the target server from mounting the backupped filesystem in the wrong place during a reboot.

|

||||

|

||||

### Performance tips

|

||||

|

||||

If you have a large number of datasets its important to keep the following tips in mind.

|

||||

|

||||

#### Some statistics

|

||||

|

||||

To get some idea of how fast zfs-autobackup is, I did some test on my laptop, with a SKHynix_HFS512GD9TNI-L2B0B disk. I'm using zfs 2.0.2.

|

||||

|

||||

I created 100 empty datasets and measured the total runtime of zfs-autobackup. I used all the performance tips below. (--no-holds, --allow-empty, ssh ControlMaster)

|

||||

|

||||

* without ssh: 15 seconds. (>6 datasets/s)

|

||||

* either ssh-target or ssh-source=localhost: 20 seconds (5 datasets/s)

|

||||

* both ssh-target and ssh-source=localhost: 24 seconds (4 datasets/s)

|

||||

|

||||

To be bold I created 2500 datasets, but that also was no problem. So it seems it should be possible to use zfs-autobackup with thousands of datasets.

|

||||

|

||||

If you need more performance let me know.

|

||||

|

||||

NOTE: There is actually a performance regression in ZFS version 2: https://github.com/openzfs/zfs/issues/11560 Use --no-progress as workaround.

|

||||

|

||||

#### Less work

|

||||

|

||||

You can make zfs-autobackup generate less work by using --no-holds and --allow-empty.

|

||||

|

||||

This saves a lot of extra zfs-commands per dataset.

|

||||

|

||||

#### Speeding up SSH

|

||||

|

||||

You can make your ssh connections persistent and greatly speed up zfs-autobackup:

|

||||

|

||||

On the backup-server add this to your ~/.ssh/config:

|

||||

|

||||

```console

|

||||

Host *

|

||||

ControlPath ~/.ssh/control-master-%r@%h:%p

|

||||

ControlMaster auto

|

||||

ControlPersist 3600

|

||||

```

|

||||

|

||||

Thanks @mariusvw :)

|

||||

|

||||

### Specifying ssh port or options

|

||||

|

||||

The correct way to do this is by creating ~/.ssh/config:

|

||||

|

||||

```console

|

||||

Host smartos04

|

||||

Hostname 1.2.3.4

|

||||

Port 1234

|

||||

user root

|

||||

Compression yes

|

||||

```

|

||||

|

||||

This way you can just specify "smartos04" as host.

|

||||

|

||||

Also uses compression on slow links.

|

||||

|

||||

Look in man ssh_config for many more options.

## Usage

Here are all the options:

```console
[root@server ~]# zfs-autobackup --help
usage: zfs-autobackup [-h] [--ssh-config SSH_CONFIG] [--ssh-source SSH_SOURCE]
                      [--ssh-target SSH_TARGET] [--keep-source KEEP_SOURCE]
                      [--keep-target KEEP_TARGET] [--other-snapshots]
                      [--no-snapshot] [--no-send] [--min-change MIN_CHANGE]
                      [--allow-empty] [--ignore-replicated] [--no-holds]
                      [--strip-path STRIP_PATH] [--clear-refreservation]
                      [--clear-mountpoint]
                      [--filter-properties FILTER_PROPERTIES]
                      [--set-properties SET_PROPERTIES] [--rollback]
                      [--destroy-incompatible] [--ignore-transfer-errors]
                      [--raw] [--test] [--verbose] [--debug] [--debug-output]
                      [--progress]
                      backup-name [target-path]

zfs-autobackup v3.0-rc12 - Copyright 2020 E.H.Eefting (edwin@datux.nl)

positional arguments:
  backup-name           Name of the backup (you should set the zfs property
                        "autobackup:backup-name" to true on filesystems you
                        want to backup
  target-path           Target ZFS filesystem (optional: if not specified,
                        zfs-autobackup will only operate as snapshot-tool on
                        source)

optional arguments:
  -h, --help            show this help message and exit
  --ssh-config SSH_CONFIG
                        Custom ssh client config
  --ssh-source SSH_SOURCE
                        Source host to get backup from. (user@hostname)
                        Default None.
  --ssh-target SSH_TARGET
                        Target host to push backup to. (user@hostname) Default
                        None.
  --keep-source KEEP_SOURCE
                        Thinning schedule for old source snapshots. Default:
                        10,1d1w,1w1m,1m1y
  --keep-target KEEP_TARGET
                        Thinning schedule for old target snapshots. Default:
                        10,1d1w,1w1m,1m1y
  --other-snapshots     Send over other snapshots as well, not just the ones
                        created by this tool.
  --no-snapshot         Don't create new snapshots (useful for finishing
                        uncompleted backups, or cleanups)
  --no-send             Don't send snapshots (useful for cleanups, or if you
                        want a serperate send-cronjob)
  --min-change MIN_CHANGE
                        Number of bytes written after which we consider a
                        dataset changed (default 1)
  --allow-empty         If nothing has changed, still create empty snapshots.
                        (same as --min-change=0)
  --ignore-replicated   Ignore datasets that seem to be replicated some other
                        way. (No changes since lastest snapshot. Useful for
                        proxmox HA replication)
  --no-holds            Don't lock snapshots on the source. (Useful to allow
                        proxmox HA replication to switches nodes)
  --strip-path STRIP_PATH
                        Number of directories to strip from target path (use 1
                        when cloning zones between 2 SmartOS machines)
  --clear-refreservation
                        Filter "refreservation" property. (recommended, safes
                        space. same as --filter-properties refreservation)
  --clear-mountpoint    Set property canmount=noauto for new datasets.
                        (recommended, prevents mount conflicts. same as --set-
                        properties canmount=noauto)
  --filter-properties FILTER_PROPERTIES
                        List of properties to "filter" when receiving
                        filesystems. (you can still restore them with zfs
                        inherit -S)
  --set-properties SET_PROPERTIES
                        List of propererties to override when receiving
                        filesystems. (you can still restore them with zfs
                        inherit -S)
  --rollback            Rollback changes to the latest target snapshot before
                        starting. (normally you can prevent changes by setting
                        the readonly property on the target_path to on)
  --destroy-incompatible
                        Destroy incompatible snapshots on target. Use with
                        care! (implies --rollback)
  --ignore-transfer-errors
                        Ignore transfer errors (still checks if received
                        filesystem exists. useful for acltype errors)
  --raw                 For encrypted datasets, send data exactly as it exists
                        on disk.
  --test                dont change anything, just show what would be done
                        (still does all read-only operations)
  --verbose             verbose output
  --debug               Show zfs commands that are executed, stops after an
                        exception.
  --debug-output        Show zfs commands and their output/exit codes. (noisy)
  --progress            show zfs progress output (to stderr). Enabled by
                        default on ttys.

When a filesystem fails, zfs_backup will continue and report the number of
failures at that end. Also the exit code will indicate the number of failures.
```
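
The thinning schedules for `--keep-source` and `--keep-target` are comma-separated rules. As we understand the notation:

- A bare number such as `10` keeps that many of the latest snapshots.
- A rule like `1d1w` keeps one snapshot per day for one week.

The parser below is a hypothetical sketch of that notation, not zfs-autobackup's own code. The unit table is also an assumption, especially `m` = 30 days and `y` = 365 days.

```python
import re

# Assumed unit lengths in seconds ("m" = 30-day month, "y" = 365-day year).
UNITS = {
    "s": 1,
    "min": 60,
    "h": 3600,
    "d": 86400,
    "w": 7 * 86400,
    "m": 30 * 86400,
    "y": 365 * 86400,
}

# "<count><unit><count><unit>", e.g. "1d1w" = period 1 day, kept for 1 week
RULE = re.compile(r"^(\d+)([a-z]+)(\d+)([a-z]+)$")


def to_seconds(count, unit):
    if unit not in UNITS:
        raise ValueError("unknown time unit: " + unit)
    return int(count) * UNITS[unit]


def parse_schedule(schedule):
    """Parse e.g. "10,1d1w" into ("latest", n) and ("every", period, ttl) tuples."""
    rules = []
    for part in schedule.split(","):
        part = part.strip()
        if part.isdigit():
            rules.append(("latest", int(part)))
            continue
        match = RULE.match(part)
        if not match:
            raise ValueError("cannot parse rule: " + part)
        period = to_seconds(match.group(1), match.group(2))
        ttl = to_seconds(match.group(3), match.group(4))
        rules.append(("every", period, ttl))
    return rules


for rule in parse_schedule("10,1d1w,1w1m,1m1y"):
    print(rule)
```

Storing each rule as a (period, ttl) pair in seconds turns the later keep-or-destroy decision into a plain numeric comparison. How zfs-autobackup actually applies the rules is not shown here.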

## Troubleshooting

### It keeps asking for my SSH password

You forgot to set up automatic login via SSH keys; see the example for how to do this.

### It says 'cannot receive incremental stream: invalid backup stream'

This usually means a new snapshot was created on the target side during a backup. If you restart zfs-autobackup, it will automatically abort the invalid, partially received snapshot and start over.

### It says 'cannot receive incremental stream: destination has been modified since most recent snapshot'

This means files have been modified on the target side somehow.

You can use `--rollback` to automatically roll back such changes.

Note: This usually happens if the source side has a non-standard mountpoint for a dataset and you're using `--clear-mountpoint`. In this case the target side creates a mountpoint in the parent dataset, causing the change.

### It says 'internal error: Invalid argument'

In some cases (Linux -> FreeBSD) this means certain properties are not fully supported on the target system.

Try using something like: `--filter-properties xattr`
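
Several options affect how properties are applied on the receiving side: `--filter-properties`, `--set-properties`, and their shortcuts `--clear-refreservation` and `--clear-mountpoint`. OpenZFS `zfs recv` offers `-x property`, which ignores the value carried in the stream, and `-o property=value`, which overrides it.

The hypothetical helper below assumes two things:

- zfs-autobackup maps its option values onto these flags.
- The option values are comma-separated.

Treat it as an illustration of the idea, not as the tool's exact receive command.

```python
def recv_argv(target, filter_properties="", set_properties="",
              clear_refreservation=False, clear_mountpoint=False):
    """Hypothetical: map property options onto `zfs recv` -x / -o flags."""
    filters = [p for p in filter_properties.split(",") if p]
    overrides = [p for p in set_properties.split(",") if p]
    if clear_refreservation:
        # help text: same as --filter-properties refreservation
        filters.append("refreservation")
    if clear_mountpoint:
        # help text: same as --set-properties canmount=noauto
        overrides.append("canmount=noauto")

    argv = ["zfs", "recv"]
    for prop in filters:
        argv += ["-x", prop]  # ignore this property's value from the stream
    for prop in overrides:
        argv += ["-o", prop]  # force property=value on the received dataset
    argv.append(target)
    return argv


print(" ".join(recv_argv("backup/smartos01", filter_properties="xattr",
                         clear_refreservation=True, clear_mountpoint=True)))
# prints: zfs recv -x xattr -x refreservation -o canmount=noauto backup/smartos01
```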

## Restore example

Restoring can be done with simple zfs commands. For example, use this to restore a specific SmartOS disk image to a temporary restore location:

```console
root@fs1:/home/psy# zfs send fs1/zones/backup/zfsbackups/smartos01.server.com/zones/a3abd6c8-24c6-4125-9e35-192e2eca5908-disk0@smartos01_fs1-20160110000003 | ssh root@2.2.2.2 "zfs recv zones/restore"
```

After that you can rename the disk image (with `zfs rename`) from the temporary location to the location of a new SmartOS machine you've created.
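
The restore command is an ordinary `zfs send | ssh ... zfs recv` pipeline. If you want to script it from Python, a minimal sketch using `subprocess.Popen` looks like this. `run_pipe` is a hypothetical helper. The project's own `CmdPipe` class serves a similar purpose but has a different API. The helper returns the last command's output and every exit code, so a failure anywhere in the pipe is visible.

```python
import subprocess


def run_pipe(*commands):
    """Run argv lists as cmd1 | cmd2 | ...; return (last stdout, exit codes)."""
    procs = []
    prev_stdout = None
    for argv in commands:
        proc = subprocess.Popen(argv, stdin=prev_stdout, stdout=subprocess.PIPE)
        if prev_stdout is not None:
            # Drop our copy so the upstream process gets SIGPIPE if this one exits early.
            prev_stdout.close()
        prev_stdout = proc.stdout
        procs.append(proc)
    output = procs[-1].communicate()[0]
    for proc in procs[:-1]:
        proc.wait()
    return output, [proc.returncode for proc in procs]


out, codes = run_pipe(["echo", "test"], ["tr", "e", "E"])
print(out, codes)

# The restore example above would become (SNAPSHOT = the full snapshot name):
# run_pipe(["zfs", "send", SNAPSHOT], ["ssh", "root@2.2.2.2", "zfs recv zones/restore"])
```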

## Monitoring with Zabbix-jobs

You can monitor backups by using my zabbix-jobs script (<https://github.com/psy0rz/stuff/tree/master/zabbix-jobs>).

Put this command directly after the zfs-autobackup command in your cronjob:

```console
zabbix-job-status backup_smartos01_fs1 daily $?
```

This will update the Zabbix server with the exit code and will also alert you if the job didn't run for more than 2 days.
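
The `$?` in the cron line works because zfs-autobackup's exit code is the number of failed datasets, as stated at the end of the `--help` output. The same pattern from Python might look like the sketch below. `run_and_report` is hypothetical. It assumes `zabbix-job-status` is on the PATH and takes the job name, interval and exit code as in the example above.

```python
import subprocess


def run_and_report(backup_argv, job_name, interval="daily", reporter="zabbix-job-status"):
    """Run a backup command, then hand its exit code to a job-status reporter.

    zfs-autobackup exits with the number of failed datasets (0 means success),
    so the code is forwarded unchanged, like `$?` in the cron line above.
    """
    exit_code = subprocess.call(backup_argv)
    subprocess.call([reporter, job_name, interval, str(exit_code)])
    return exit_code


# Hypothetical invocation; replace the backup name and target path with your own:
# run_and_report(["zfs-autobackup", "my_backup", "pool/backups"], "backup_smartos01_fs1")
```

Forwarding the code as-is keeps the reporter's "0 means OK" convention intact. Note that `subprocess.call` raises `FileNotFoundError` if the reporter is not installed.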

## Back up a Proxmox cluster with HA replication

Due to the nature of Proxmox, we had to make a few enhancements to zfs-autobackup. These will probably also benefit other systems that use their own replication in combination with zfs-autobackup.

All data under rpool/data can be on multiple nodes of the cluster, and the names of those filesystems are unique across the whole cluster. Because of this, we should back up rpool/data of all nodes to the same destination. This way we won't have duplicate backups of the filesystems that are replicated. Thanks to various options, you can even migrate hosts and zfs-autobackup will be fine (it will get the next backup from the new node automatically).
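
Why a common destination avoids duplicate backups can be seen from how target names are formed. As we understand it, `--strip-path N` removes the first N components of the source dataset name before it is appended to the target path. The helper below is a hypothetical sketch of that mapping, not zfs-autobackup's code. `vm-100-disk-0` and `pve-1` are example dataset names.

```python
def target_name(source_dataset, target_path, strip_path=0):
    """Hypothetical sketch: name a source dataset receives under target_path."""
    parts = source_dataset.split("/")
    # Guard added for this sketch: always keep at least the last component.
    kept = parts[min(strip_path, len(parts) - 1):]
    return target_path + "/" + "/".join(kept)


# The same replicated VM disk, backed up once from each node:
for node in ("pve1", "pve2"):
    print(node, target_name("rpool/data/vm-100-disk-0", "rpool/pvebackups/data", strip_path=2))

# Per-host rpool backups get their own directory per node:
print(target_name("rpool/ROOT/pve-1", "rpool/pvebackups/rpools/pve1", strip_path=2))
```

Because both nodes map the replicated disk to the same target dataset, the backup server keeps a single copy of it.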

In the example below we have 3 nodes, named pve1, pve2 and pve3.

### Preparing the Proxmox nodes

No preparation is needed; the script will take care of everything. You only need to set up the SSH keys so that the backup server can access the Proxmox servers.

TIP: Make sure your backup server is firewalled and cannot be reached from any production machine.

### SSH config on backup server

I use `~/.ssh/config` to specify how to reach the various hosts.

In this example we are making an offsite copy and use port forwarding to reach the Proxmox machines:

```
Host *
    ControlPath ~/.ssh/control-master-%r@%h:%p
    ControlMaster auto
    ControlPersist 3600
    Compression yes

Host pve1
    Hostname some.host.com
    Port 10001

Host pve2
    Hostname some.host.com
    Port 10002

Host pve3
    Hostname some.host.com
    Port 10003
```

### Backup script

I use the following backup script on the backup server.

Adjust the variables HOSTS, TARGET and NAME to your needs.

```shell
#!/bin/bash

HOSTS="pve1 pve2 pve3"
TARGET=rpool/pvebackups
NAME=prox

zfs create -p $TARGET/data &>/dev/null
for HOST in $HOSTS; do

    echo "################################### RPOOL $HOST"

    # enable backup
    ssh $HOST "zfs set autobackup:rpool_$NAME=child rpool/ROOT"

    #backup rpool to specific directory per host
    zfs create -p $TARGET/rpools/$HOST &>/dev/null
    zfs-autobackup --keep-source=1d1w,1w1m --ssh-source $HOST rpool_$NAME $TARGET/rpools/$HOST --clear-mountpoint --clear-refreservation --ignore-transfer-errors --strip-path 2 --verbose --no-holds "$@"

    zabbix-job-status backup_$HOST""_rpool_$NAME daily $? >/dev/null 2>/dev/null


    echo "################################### DATA $HOST"

    # enable backup
    ssh $HOST "zfs set autobackup:data_$NAME=child rpool/data"

    #backup data filesystems to a common directory
    zfs-autobackup --keep-source=1d1w,1w1m --ssh-source $HOST data_$NAME $TARGET/data --clear-mountpoint --clear-refreservation --ignore-transfer-errors --strip-path 2 --verbose --ignore-replicated --min-change 200000 --no-holds "$@"

    zabbix-job-status backup_$HOST""_data_$NAME daily $? >/dev/null 2>/dev/null

done
```

This script will also send the backup status to Zabbix (if you've installed my zabbix-job-status script: https://github.com/psy0rz/stuff/tree/master/zabbix-jobs).
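
The data run in the script adds `--ignore-replicated --min-change 200000`. Per the help text, `--min-change` is the number of bytes written after which a dataset counts as changed, and `--allow-empty` is the same as `--min-change=0`. Below is a minimal sketch of that check. It assumes the check compares the ZFS `written` property against the threshold. That property holds the bytes written since the previous snapshot and can be read with `zfs get -Hp -o value written <dataset>`. `is_changed` is a hypothetical name.

```python
def is_changed(written_bytes, min_change=1):
    """Hypothetical sketch of the --min-change test for one dataset."""
    if min_change == 0:
        # --allow-empty is documented as --min-change=0: always snapshot
        return True
    return written_bytes >= min_change


for written in (0, 4096, 250000):
    print(written, is_changed(written, min_change=200000))
# prints:
# 0 False
# 4096 False
# 250000 True
```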

Please see our wiki to [get started](https://github.com/psy0rz/zfs_autobackup/wiki).

# Sponsor list

@@ -1,6 +1,6 @@
colorama
argparse
coverage==4.5.4
coverage
python-coveralls
unittest2
mock


3

setup.py

@@ -18,7 +18,8 @@ setuptools.setup(
    entry_points={
        'console_scripts':
            [
                'zfs-autobackup = zfs_autobackup:cli',
                'zfs-autobackup = zfs_autobackup.ZfsAutobackup:cli',
                'zfs-autoverify = zfs_autobackup.ZfsAutoverify:cli',
            ]
    },
    packages=setuptools.find_packages(),

6

tests/autoruntests

Executable file

@@ -0,0 +1,6 @@
#!/bin/bash

#NOTE: run from top directory

find tests/*.py zfs_autobackup/*.py| entr -r ./tests/run_tests $@

@@ -1,4 +1,6 @@

# To run tests as non-root, use this hack:
# chmod 4755 /usr/sbin/zpool /usr/sbin/zfs

import subprocess
import random
@@ -9,6 +11,7 @@ import subprocess
import time
from pprint import *
from zfs_autobackup.ZfsAutobackup import *
from zfs_autobackup.ZfsAutoverify import *
from mock import *
import contextlib
import sys

@@ -58,7 +61,9 @@ def redirect_stderr(target):

def shelltest(cmd):
    """execute and print result as nice copypastable string for unit tests (adds extra newlines on top/bottom)"""
    ret=(subprocess.check_output(cmd , shell=True).decode('utf-8'))

    ret=(subprocess.check_output("SUDO_ASKPASS=./password.sh sudo -A "+cmd , shell=True).decode('utf-8'))

    print("######### result of: {}".format(cmd))
    print(ret)
    print("#########")

5

tests/run_test

Executable file

@@ -0,0 +1,5 @@
#!/bin/bash

#run one test. start from main directory

python -m unittest discover tests $@ -vvvf

@@ -19,7 +19,7 @@ if ! [ -e /root/.ssh/id_rsa ]; then
fi


coverage run --source zfs_autobackup -m unittest discover -vvvvf $SCRIPTDIR $@ 2>&1
coverage run --branch --source zfs_autobackup -m unittest discover -vvvvf $SCRIPTDIR $@ 2>&1
EXIT=$?

echo

123

tests/test_cmdpipe.py

Normal file

@@ -0,0 +1,123 @@
from basetest import *
from zfs_autobackup.CmdPipe import CmdPipe,CmdItem


class TestCmdPipe(unittest2.TestCase):

    def test_single(self):
        """single process stdout and stderr"""
        p=CmdPipe(readonly=False, inp=None)
        err=[]
        out=[]
        p.add(CmdItem(["ls", "-d", "/", "/", "/nonexistent"], stderr_handler=lambda line: err.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,2)))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err, ["ls: cannot access '/nonexistent': No such file or directory"])
        self.assertEqual(out, ["/","/"])
        self.assertIsNone(executed)

    def test_input(self):
        """test stdinput"""
        p=CmdPipe(readonly=False, inp="test")
        err=[]
        out=[]
        p.add(CmdItem(["cat"], stderr_handler=lambda line: err.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,0)))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err, [])
        self.assertEqual(out, ["test"])
        self.assertIsNone(executed)

    def test_pipe(self):
        """test piped"""
        p=CmdPipe(readonly=False)
        err1=[]
        err2=[]
        err3=[]
        out=[]
        p.add(CmdItem(["echo", "test"], stderr_handler=lambda line: err1.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,0)))
        p.add(CmdItem(["tr", "e", "E"], stderr_handler=lambda line: err2.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,0)))
        p.add(CmdItem(["tr", "t", "T"], stderr_handler=lambda line: err3.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,0)))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err1, [])
        self.assertEqual(err2, [])
        self.assertEqual(err3, [])
        self.assertEqual(out, ["TEsT"])
        self.assertIsNone(executed)

        #test str representation as well
        self.assertEqual(str(p), "(echo test) | (tr e E) | (tr t T)")

    def test_pipeerrors(self):
        """test piped stderrs """
        p=CmdPipe(readonly=False)
        err1=[]
        err2=[]
        err3=[]
        out=[]
        p.add(CmdItem(["ls", "/nonexistent1"], stderr_handler=lambda line: err1.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,2)))
        p.add(CmdItem(["ls", "/nonexistent2"], stderr_handler=lambda line: err2.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,2)))
        p.add(CmdItem(["ls", "/nonexistent3"], stderr_handler=lambda line: err3.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,2)))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err1, ["ls: cannot access '/nonexistent1': No such file or directory"])
        self.assertEqual(err2, ["ls: cannot access '/nonexistent2': No such file or directory"])
        self.assertEqual(err3, ["ls: cannot access '/nonexistent3': No such file or directory"])
        self.assertEqual(out, [])
        self.assertIsNone(executed)

    def test_exitcode(self):
        """test piped exitcodes """
        p=CmdPipe(readonly=False)
        err1=[]
        err2=[]
        err3=[]
        out=[]
        p.add(CmdItem(["bash", "-c", "exit 1"], stderr_handler=lambda line: err1.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,1)))
        p.add(CmdItem(["bash", "-c", "exit 2"], stderr_handler=lambda line: err2.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,2)))
        p.add(CmdItem(["bash", "-c", "exit 3"], stderr_handler=lambda line: err3.append(line), exit_handler=lambda exit_code: self.assertEqual(exit_code,3)))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err1, [])
        self.assertEqual(err2, [])
        self.assertEqual(err3, [])
        self.assertEqual(out, [])
        self.assertIsNone(executed)

    def test_readonly_execute(self):
        """everything readonly, just should execute"""

        p=CmdPipe(readonly=True)
        err1=[]
        err2=[]
        out=[]

        def true_exit(exit_code):
            return True

        p.add(CmdItem(["echo", "test1"], stderr_handler=lambda line: err1.append(line), exit_handler=true_exit, readonly=True))
        p.add(CmdItem(["echo", "test2"], stderr_handler=lambda line: err2.append(line), exit_handler=true_exit, readonly=True))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err1, [])
        self.assertEqual(err2, [])
        self.assertEqual(out, ["test2"])
        self.assertTrue(executed)

    def test_readonly_skip(self):
        """one command not readonly, skip"""

        p=CmdPipe(readonly=True)
        err1=[]
        err2=[]
        out=[]
        p.add(CmdItem(["echo", "test1"], stderr_handler=lambda line: err1.append(line), readonly=False))
        p.add(CmdItem(["echo", "test2"], stderr_handler=lambda line: err2.append(line), readonly=True))
        executed=p.execute(stdout_handler=lambda line: out.append(line))

        self.assertEqual(err1, [])
        self.assertEqual(err2, [])
        self.assertEqual(out, [])
        self.assertTrue(executed)

@@ -13,17 +13,17 @@ class TestZfsNode(unittest2.TestCase):
    def test_destroymissing(self):

        #initial backup
        with patch('time.strftime', return_value="10101111000000"): #1000 years in past
            self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-holds".split(" ")).run())
        with patch('time.strftime', return_value="test-19101111000000"): #1000 years in past
            self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-holds".split(" ")).run())

        with patch('time.strftime', return_value="20101111000000"): #far in past
            self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-holds --allow-empty".split(" ")).run())
        with patch('time.strftime', return_value="test-20101111000000"): #far in past
            self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-holds --allow-empty".split(" ")).run())


        with self.subTest("Should do nothing yet"):
            with OutputIO() as buf:
                with redirect_stdout(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())
                self.assertNotIn(": Destroy missing", buf.getvalue())
@@ -36,11 +36,11 @@ class TestZfsNode(unittest2.TestCase):

            with OutputIO() as buf:
                with redirect_stdout(buf), redirect_stderr(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())
                #should have done the snapshot cleanup for destoy missing:
                self.assertIn("fs1@test-10101111000000: Destroying", buf.getvalue())
                self.assertIn("fs1@test-19101111000000: Destroying", buf.getvalue())

                self.assertIn("fs1: Destroy missing: Still has children here.", buf.getvalue())

@@ -54,7 +54,7 @@ class TestZfsNode(unittest2.TestCase):
            with OutputIO() as buf:
                with redirect_stdout(buf):
                    #100y: lastest should not be old enough, while second to latest snapshot IS old enough:
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 100y".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 100y".split(" ")).run())

                print(buf.getvalue())
                self.assertIn(": Waiting for deadline", buf.getvalue())
@@ -62,7 +62,7 @@ class TestZfsNode(unittest2.TestCase):
            #past deadline, destroy
            with OutputIO() as buf:
                with redirect_stdout(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 1y".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 1y".split(" ")).run())

                print(buf.getvalue())
                self.assertIn("sub: Destroying", buf.getvalue())
@@ -75,7 +75,7 @@ class TestZfsNode(unittest2.TestCase):

            with OutputIO() as buf:
                with redirect_stdout(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())

@@ -90,7 +90,7 @@ class TestZfsNode(unittest2.TestCase):

            with OutputIO() as buf:
                with redirect_stdout(buf), redirect_stderr(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())
                #now tries to destroy our own last snapshot (before the final destroy of the dataset)
@@ -105,7 +105,7 @@ class TestZfsNode(unittest2.TestCase):

            with OutputIO() as buf:
                with redirect_stdout(buf), redirect_stderr(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())
                #should have done the snapshot cleanup for destoy missing:
@@ -113,7 +113,7 @@ class TestZfsNode(unittest2.TestCase):

            with OutputIO() as buf:
                with redirect_stdout(buf), redirect_stderr(buf):
                    self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())
                    self.assertFalse(ZfsAutobackup("test test_target1 --no-progress --verbose --no-snapshot --destroy-missing 0s".split(" ")).run())

                print(buf.getvalue())
                #on second run it sees the dangling ex-parent but doesnt know what to do with it (since it has no own snapshot)
@@ -130,6 +130,6 @@ test_target1/test_source1
test_target1/test_source2
test_target1/test_source2/fs2
test_target1/test_source2/fs2/sub
test_target1/test_source2/fs2/sub@test-10101111000000
test_target1/test_source2/fs2/sub@test-19101111000000
test_target1/test_source2/fs2/sub@test-20101111000000
""")

193

tests/test_encryption.py

Normal file

@@ -0,0 +1,193 @@
from zfs_autobackup.CmdPipe import CmdPipe
from basetest import *
import time

# We have to do a LOT to properly test encryption/decryption/raw transfers
#
# For every scenario we need at least:
# - plain source dataset
# - encrypted source dataset
# - plain target path
# - encrypted target path
# - do a full transfer
# - do a incremental transfer

# Scenarios:
# - Raw transfer
# - Decryption transfer (--decrypt)
# - Encryption transfer (--encrypt)
# - Re-encryption transfer (--decrypt --encrypt)

class TestZfsEncryption(unittest2.TestCase):


    def setUp(self):
        prepare_zpools()

        try:
            shelltest("zfs get encryption test_source1")
        except:
            self.skipTest("Encryption not supported on this ZFS version.")

    def prepare_encrypted_dataset(self, key, path, unload_key=False):

        # create encrypted source dataset
        shelltest("rm /tmp/zfstest.key 2>/dev/null;true")
        shelltest("echo {} > /tmp/zfstest.key".format(key))
        shelltest("zfs create -o keylocation=file:///tmp/zfstest.key -o keyformat=passphrase -o encryption=on {}".format(path))

        if unload_key:
            shelltest("zfs unmount {}".format(path))
            shelltest("zfs unload-key {}".format(path))

        # r=shelltest("dd if=/dev/zero of=/test_source1/fs1/enc1/data.txt bs=200000 count=1")

    def test_raw(self):
        """send encrypted data unaltered (standard operation)"""

        self.prepare_encrypted_dataset("11111111", "test_source1/fs1/encryptedsource")
        self.prepare_encrypted_dataset("11111111", "test_source1/fs1/encryptedsourcekeyless", unload_key=True) # raw mode shouldn't need a key
        self.prepare_encrypted_dataset("22222222", "test_target1/encryptedtarget")

        with patch('time.strftime', return_value="test-20101111000000"):
            self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-progress --allow-empty --exclude-received".split(" ")).run())
            self.assertFalse(ZfsAutobackup("test test_target1/encryptedtarget --verbose --no-progress --no-snapshot --exclude-received".split(" ")).run())

        with patch('time.strftime', return_value="test-20101111000001"):
            self.assertFalse(ZfsAutobackup("test test_target1 --verbose --no-progress --allow-empty --exclude-received".split(" ")).run())
            self.assertFalse(ZfsAutobackup("test test_target1/encryptedtarget --verbose --no-progress --no-snapshot --exclude-received".split(" ")).run())

        r = shelltest("zfs get -r -t filesystem encryptionroot test_target1")
        self.assertMultiLineEqual(r,"""
NAME PROPERTY VALUE SOURCE
test_target1 encryptionroot - -
test_target1/encryptedtarget encryptionroot test_target1/encryptedtarget -
test_target1/encryptedtarget/test_source1 encryptionroot test_target1/encryptedtarget -
test_target1/encryptedtarget/test_source1/fs1 encryptionroot - -
test_target1/encryptedtarget/test_source1/fs1/encryptedsource encryptionroot test_target1/encryptedtarget/test_source1/fs1/encryptedsource -
test_target1/encryptedtarget/test_source1/fs1/encryptedsourcekeyless encryptionroot test_target1/encryptedtarget/test_source1/fs1/encryptedsourcekeyless -
test_target1/encryptedtarget/test_source1/fs1/sub encryptionroot - -
test_target1/encryptedtarget/test_source2 encryptionroot test_target1/encryptedtarget -
test_target1/encryptedtarget/test_source2/fs2 encryptionroot test_target1/encryptedtarget -
test_target1/encryptedtarget/test_source2/fs2/sub encryptionroot - -
test_target1/test_source1 encryptionroot - -
test_target1/test_source1/fs1 encryptionroot - -
test_target1/test_source1/fs1/encryptedsource encryptionroot test_target1/test_source1/fs1/encryptedsource -
test_target1/test_source1/fs1/encryptedsourcekeyless encryptionroot test_target1/test_source1/fs1/encryptedsourcekeyless -
test_target1/test_source1/fs1/sub encryptionroot - -
test_target1/test_source2 encryptionroot - -
test_target1/test_source2/fs2 encryptionroot - -
test_target1/test_source2/fs2/sub encryptionroot - -
""")

    def test_decrypt(self):
        """decrypt data and store unencrypted (--decrypt)"""

        self.prepare_encrypted_dataset("11111111", "test_source1/fs1/encryptedsource")
        self.prepare_encrypted_dataset("22222222", "test_target1/encryptedtarget")

        with patch('time.strftime', return_value="test-20101111000000"):